CQRS Simplified the Design. It Complicated Production.

CQRS simplifies design but complicates production. Eventual consistency, read model lag, and failing event pipelines create stale data and hidden failures. What looks clean in architecture reviews often breaks under real-world load.

This is part of The True Code of Production Systems. The series is about the decisions that only become visible when something breaks in production.

The architecture review went well.

The team had inherited a monolithic .NET application sitting on a single Azure SQL database. Reads and writes were competing for the same tables. Reporting queries were locking rows that the order processing service needed. Dashboard endpoints were slow because they were running against the same database that was handling live transactions. The system was not broken, but it was visibly straining, and the team knew that the next stage of growth would expose the cracks.

CQRS was the recommendation. Separate the write side from the read side. Commands go through a dedicated write model. Events flow through Azure Service Bus. Read models get projected from those events and stored in a separate Azure Cosmos DB optimised for query patterns. The design looked clean on paper. Implementing CQRS in production, the team would discover, was a different conversation entirely. The concerns were separated. The architecture diagram made sense to everyone in the room.

The system went live eight months later. Three months after that, the on-call rotation started filling up with incidents that the architecture was supposed to prevent.

The Arrow on the Diagram Is Not a Promise

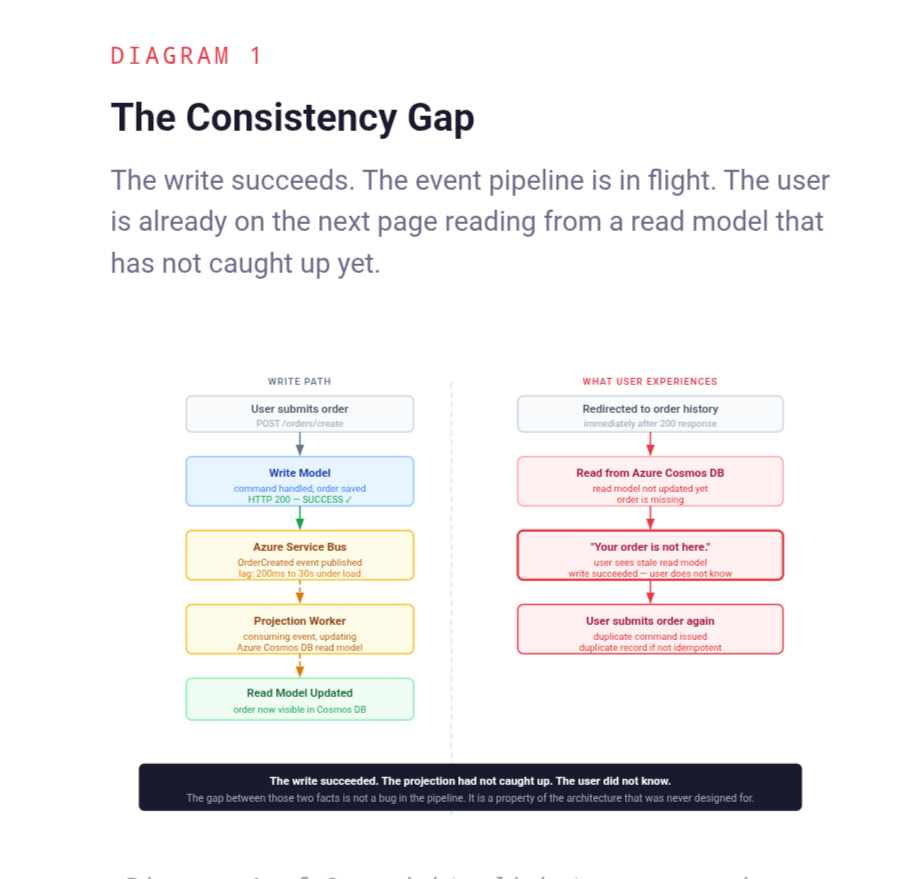

The architecture diagram showed the write model publishing an event, the event flowing through Azure Service Bus, and the projection worker updating the read model. Clean, logical, separated. Everyone in the review understood it.

What the diagram did not show was time. The arrow between the write model and the read model looks instantaneous on a whiteboard. In production it is not. It is a pipeline with its own throughput, its own backpressure, its own failure modes, and its own latency that varies with load. On a quiet morning it might be two hundred milliseconds. During a deployment, a Service Bus partition rebalance, or a spike in write volume, it can be seconds. After a projection worker restart with a large backlog, it can be minutes.

The system that was designed around that arrow being fast discovered, in production, that fast is not the same as guaranteed. And the entire user experience built on top of the read model was built on that assumption.

Ask yourself: in the CQRS system running in production right now, how long does it take for a completed command to appear in the read model? Has that number ever been measured under load? Does the business know it exists?

The Gap Between the Write and the Read: CQRS Eventual Consistency in Practice

Every CQRS system has a lag between when a command completes and when the read model reflects the result of that command. This is CQRS eventual consistency - not a bug, not a misconfiguration, but a fundamental property of the pattern. In a well-functioning system at low load, that lag is measured in milliseconds and is practically invisible. In a system under pressure, during a deployment, after a Service Bus partition rebalance, or when the projection worker is catching up after a restart, that CQRS read model lag can stretch to seconds, minutes, or longer.

The application was not designed to handle this gracefully. The write side returned success. The user was redirected to a page that read from the read model. The read model had not caught up yet. The page showed stale data. The user, who had just completed an action they expected to see reflected immediately, saw no evidence that anything had happened.

This produces a specific and predictable support ticket: the user reports that the action did not work. They try again. The command runs twice. Depending on whether the command handler was written to be idempotent - and in the early versions of most CQRS implementations, it was not - the duplicate command either fails with a confusing error or succeeds and creates a duplicate record.

The architecture had separated reads from writes. It had not separated the user's expectation from the system's consistency model. That gap did not appear on the diagram. It appeared in the support queue. It is the same category of problem as a health check that says the service is up while the service cannot actually serve requests - the system reports one thing, the user experiences another, and the gap between those two things is where the incident lives.

Ask yourself: in the user journeys where a command is followed immediately by a read, what does the user see if the read model has not caught up? Has that scenario been designed for, or is it handled by hoping the projection is fast enough?

When the Event Pipeline Fails Silently: The Projection Worker Problem

The write model and the read model staying in sync depends entirely on the event pipeline between them. That pipeline is a runtime dependency with its own availability, its own throughput limits, and its own failure modes. Most teams treat it as infrastructure and assume it is working. Production reveals that assumption regularly.

Azure Service Bus is reliable. It is not infallible. A partition rebalance during a deployment can cause a brief period where messages are not being consumed. A misconfigured dead-letter threshold can cause the projection worker to stop processing without raising a visible alarm. A poison message - one that causes the projection handler to throw an exception on every attempt - can block an entire partition while the worker retries it indefinitely, leaving every subsequent event queued behind it. Projection worker monitoring is not optional in a CQRS system. It is the difference between knowing the pipeline is stalled and discovering it from a business report three hours later.

In each of these cases, the write side of the system continues to function normally. Commands are accepted, business logic executes, writes succeed. The read model quietly falls behind. The gap between what happened and what the system reports happened grows with every command that is processed while the projection pipeline is stalled.

The particularly damaging version of this is when the stall happens slowly. Not a hard failure that triggers an alert, but a gradual slowdown - the projection worker processing slightly fewer events per second than the write side is producing. The read model drift starts at seconds, grows to minutes, then to hours. By the time it is noticed, the read model is materially wrong and the event backlog is large enough that catching up will take longer than anyone wants to acknowledge. This is the event-driven equivalent of a cache that is evicting data slightly faster than it can be rebuilt - degradation that is invisible until it is suddenly very visible.

A background job with no owner and no monitoring is one of the most dangerous things in a production system. A projection worker is exactly that unless the team has deliberately built monitoring around it.

Ask yourself: if the projection worker stopped processing events right now, how long would it take to notice? Is there an alert on projection lag? Is there an alert on dead-letter queue depth?

Rebuilding the CQRS Read Model Is Not a Simple Operation

One of the theoretical advantages of CQRS is that read models are disposable. Because the write side retains the full event history, any read model can be dropped and rebuilt from scratch by replaying all events. This is presented as a feature - the ability to add new read models, fix bugs in projections, or change the schema of a read model without affecting the write side.

In practice, replaying a production event history against a live system is a careful operation that most teams underestimate the first time they do it.

An event store that has been accumulating events for two years may contain tens of millions of records. Replaying those events through the projection handlers, writing the results into Azure Cosmos DB, and doing all of this while the write side is still producing new events requires coordination that the original implementation almost certainly did not plan for. If the replay runs in production against the live read model, users see inconsistent data during the rebuild window. If it runs against a separate instance, there is a cutover moment where the system switches from the old model to the new one - a moment that needs to be handled carefully to avoid serving stale data from the old model after the switch.

The team that built the original system usually discovers this the first time a bug in the projection logic requires a full rebuild. The rebuild takes longer than expected. It saturates Azure Cosmos DB request units in ways that affect live read traffic. This is the same dynamic as scaling the application layer when the database is the bottleneck - the operation that was supposed to fix the problem adds pressure to the layer that cannot absorb it. The event replay produces side effects in projection handlers that were not designed to be run twice - sending notifications, updating external systems, triggering workflows that should only fire once per event. Idempotency in event handlers is not optional in a CQRS system. It is the foundation on which the entire read model rebuild capability depends, and it needs to be designed in from the beginning, not retrofitted after the first rebuild incident.

Ask yourself: if the read model needed to be rebuilt from scratch today, what would happen? How long would it take? What would users see during the rebuild? Would any projection handler produce side effects that should not happen twice?

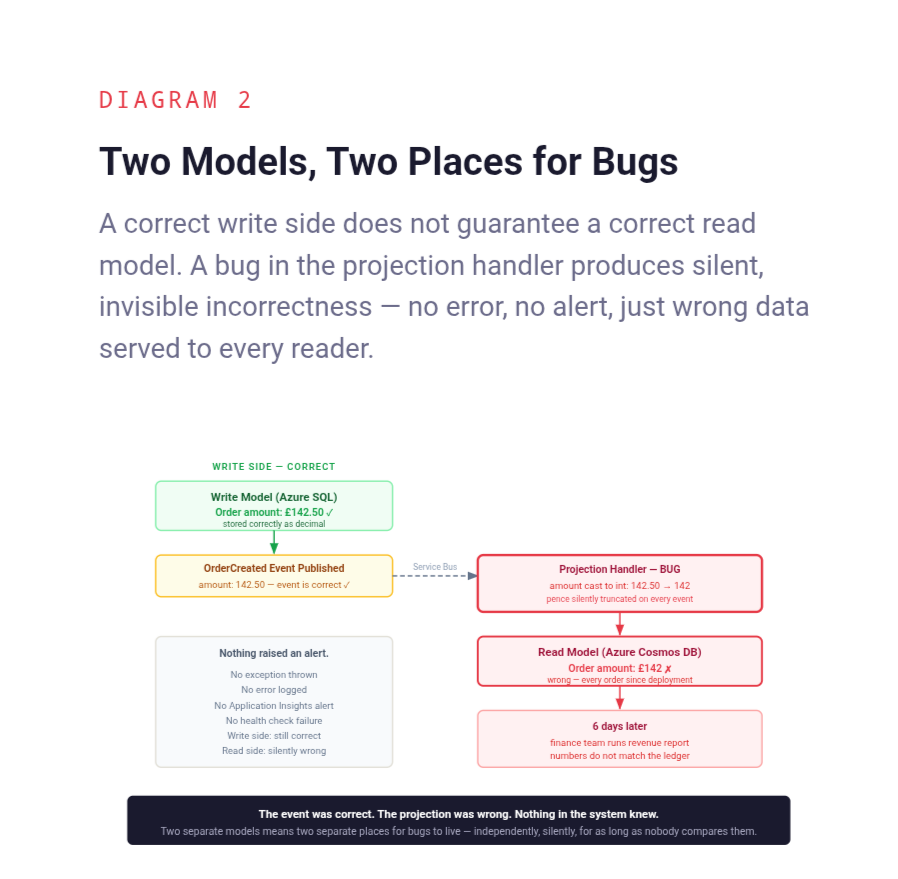

Two Models Mean Two Places for Bugs to Hide

Before CQRS, a query against the database returned what was in the database. The write and read paths shared the same data, the same schema, the same validation. A bug in the write path was visible when you read the data back. A schema change applied once.

After CQRS, the write model and the read model are separate code, maintained separately, deployed separately, and potentially owned by separate teams. A bug in the projection logic can silently produce incorrect read model data while the write model remains completely correct. The system looks healthy. The write side is functioning. The read model is quietly wrong in ways that may not be obvious until someone runs a business report and notices numbers that do not add up.

This is a class of production bug that is structurally harder to detect than most. The write side has no visibility into what the projection is doing with the events it produces. The read side has no ability to verify that the data it is serving is consistent with the write side. The only way to catch divergence is to build explicit reconciliation - periodic jobs that compare write side state to read model state and raise an alert when they do not match. Most teams do not build this until after the first incident that would have been prevented by it.

Ask yourself: if there is a bug in a projection handler today that is producing incorrect read model data, what would surface it? Is there any reconciliation between the write model and the read model, or is the read model trusted unconditionally?

The Operational Cost Nobody Budgeted For

The architecture review that recommended CQRS focused on the design benefits. It did not produce an estimate of what the pattern would cost to operate.

Running a CQRS system in production means running and monitoring the write model, the read model, the event pipeline, and the projection workers - each of which is a separate operational concern. Azure Service Bus needs to be monitored for dead-letter depth, message age, and processing lag. Azure Cosmos DB needs to be monitored for request unit consumption, partition key distribution, and storage growth. Projection workers need health checks, restart policies, and lag alerts. The event store needs retention policies and archival strategies as it grows.

None of this is unreasonable. But none of it is free, and the team that adopted CQRS to simplify the system often finds themselves operating a system that is significantly more complex to run than the monolith it replaced. The complexity did not disappear. It moved - from the query layer into the operational layer, where it is less visible during development and more painful during incidents.

A system that requires more operational effort than the team budgeted for is a system that produces more incidents than the team planned for. The architecture decision and the operational budget are the same decision. Most teams make them separately.

Ask yourself: for the CQRS system running in production, is there a documented runbook for each of the following: projection worker is down, Service Bus partition is rebalancing, read model needs to be rebuilt, dead-letter queue is growing? If those runbooks do not exist, the system is being operated on institutional knowledge.

Back to the Architecture Review

The team from that architecture review eventually got the system to a stable state. It took longer than the migration had taken. It required adding projection lag monitoring, dead-letter alerts, and a reconciliation job that ran nightly and compared write side aggregate counts to read model counts. It required retrofitting idempotency into projection handlers that had not been written with replay in mind. It required several difficult conversations with the product team about why users occasionally saw stale data immediately after completing an action.

None of that work appeared in the original architecture proposal. The proposal showed what the system would look like when it was working correctly. Production showed what the system looked like when the assumptions behind the design were tested.

CQRS is a legitimate pattern for systems with specific scaling and query flexibility requirements. The teams that use it successfully are the ones that go into the decision with clear answers to the questions this article has raised. The teams that use it as a general-purpose simplification technique discover, in production, that they have traded one set of problems for a different set - and the new set came with a larger operational bill than anyone anticipated.

The design was simpler. The system was not.

You May Also Like

- System Thinking Is Not Architecture — And That’s Why Most Architects Get It Wrong

- The First Thing You Should Do in a Production Incident Is Not What You Think

- Caching Is Easy. Production Caching Is Not.

The True Code of Production Systems is a series about the decisions that only become visible when something breaks in production.

Read the full series at The True Code of Production Systems